Artificial intelligence is no longer just about building models in a research environment. To create real-world impact, machine learning (ML) models must be deployed, monitored, and continuously improved in production. That’s where MLOps (Machine Learning Operations) comes in. Much as DevOps streamlined software delivery, MLOps streamlines the end-to-end lifecycle of ML systems, from data ingestion through model deployment, monitoring, and retraining.

At the heart of any successful MLOps implementation lies its architecture. The architecture defines how data, models, infrastructure, and teams interact to support scalable, reliable, and reproducible ML workflows.

Why MLOps Architectures Matter

Without a strong architectural foundation, organizations often face challenges such as:

- Model drift: Deployed models degrade over time due to changing data distributions.

- Scalability issues: Moving from prototyping on a laptop to production-level systems is often non-trivial.

- Collaboration gaps: Data scientists, ML engineers, and DevOps teams often operate in silos.

- Reproducibility challenges: Re-running experiments and comparing results is unreliable when data, code, and environments are not versioned consistently.

A well-designed MLOps architecture addresses these challenges by providing structure, automation, and scalability.

Core Components of an MLOps Architecture

- Data Layer
  - Data ingestion (batch and streaming sources)
  - Data validation and quality checks
  - Feature engineering pipelines
  - Feature stores for reusability

- Experimentation Layer
  - Version-controlled notebooks and scripts
  - Experiment tracking (parameters, metrics, artifacts)
  - Reproducible environments with containers

- Training and Model Management
  - Automated training pipelines (triggered by new data or code)
  - Model versioning and registry
  - Hyperparameter optimization

- Deployment Layer
  - Deployment strategies (batch inference, online APIs, edge deployment)
  - CI/CD pipelines for ML models
  - Infrastructure as Code (IaC)

- Monitoring and Feedback
  - Model performance monitoring (latency, accuracy, drift detection)
  - Logging and observability
  - Feedback loops for automated retraining

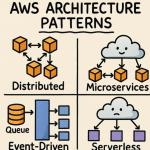
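To make the monitoring and feedback layer more concrete, here is a minimal sketch of a drift check that could feed a retraining trigger. It uses the Population Stability Index (PSI) as one possible drift measure; the article does not prescribe a specific metric, so the choice of PSI, the function names, the bin count, and the 0.2 threshold are all assumptions made for illustration.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one numeric feature; larger values mean more drift."""
    lo, hi = min(reference), max(reference)
    # Fall back to a width of 1.0 when the reference sample is constant.
    width = (hi - lo) / bins or 1.0

    def bin_fractions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the reference range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each fraction at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_frac = bin_fractions(reference)
    cur_frac = bin_fractions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_frac, cur_frac))


def should_retrain(psi: float, threshold: float = 0.2) -> bool:
    """Hypothetical feedback-loop hook: flag retraining when drift exceeds the threshold."""
    return psi > threshold
```

In a real system, `reference` would typically be a sample of the training data and `current` a recent window of production inputs, with the result exported to the monitoring stack. The 0.2 threshold is a commonly quoted rule of thumb and should be tuned per feature.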

Types of MLOps Architectures

- Manual/Ad-hoc Architecture (Level 0)
  - Data scientists train models locally and hand them off for deployment.
  - Low automation, high manual effort.
  - Useful for early experimentation, but not scalable.

- Automated Training & Deployment (Level 1)
  - CI/CD pipelines for ML workflows.
  - Automated retraining when new data arrives.
  - Model registry integrated into deployment pipelines.

- Continuous Training and Monitoring (Level 2+)
  - Full ML lifecycle automation: data ingestion → training → deployment → monitoring.
  - Feedback loops for continuous learning.
  - Strong governance, reproducibility, and compliance.
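One way to picture the step from Level 0 to Level 1 is a registry that gates promotion on a metric instead of relying on a manual hand-off. The sketch below is an in-memory stand-in, not the API of any real registry product; the class names, the `stage` values, and the `promote_if_better` rule are hypothetical and chosen only to illustrate the idea.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str
    metrics: dict[str, float]
    stage: str = "staging"  # illustrative stages: staging, production, archived


@dataclass
class ModelRegistry:
    """In-memory stand-in for a model registry (illustrative only)."""

    versions: dict[str, list[ModelVersion]] = field(default_factory=dict)

    def register(self, name: str, artifact_uri: str, metrics: dict[str, float]) -> ModelVersion:
        # Version numbers increase monotonically per model name.
        history = self.versions.setdefault(name, [])
        mv = ModelVersion(len(history) + 1, artifact_uri, dict(metrics))
        history.append(mv)
        return mv

    def production(self, name: str) -> Optional[ModelVersion]:
        # Return the most recent version currently marked as production, if any.
        for mv in reversed(self.versions.get(name, [])):
            if mv.stage == "production":
                return mv
        return None

    def promote_if_better(
        self, name: str, candidate: ModelVersion, metric: str = "accuracy", min_gain: float = 0.0
    ) -> bool:
        # Promote only if the candidate matches or beats production by min_gain.
        current = self.production(name)
        if current is not None:
            if candidate.metrics[metric] < current.metrics[metric] + min_gain:
                return False
            current.stage = "archived"
        candidate.stage = "production"
        return True
```

An automated training pipeline would call `register` at the end of each run and `promote_if_better` as its deployment gate, so a weaker model never replaces the one in production without a human deciding to override the check.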

Example MLOps Architecture Pattern

A modern enterprise-grade architecture might look like this:

- Data Ingestion → Kafka or Spark Streaming

- Feature Engineering → Feature Store (Feast, Tecton)

- Experiment Tracking → MLflow or Weights & Biases

- Model Registry → MLflow Model Registry or SageMaker Model Registry

- CI/CD Pipelines → GitHub Actions, Jenkins, or GitLab CI

- Deployment → Kubernetes with KServe (formerly KFServing) or Seldon Core

- Monitoring → Prometheus, Grafana, and Evidently AI

This modular, layered approach supports scalability and flexibility, and it lets teams plug in best-of-breed tools at each layer.
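The "plug in" property can be sketched by having the pipeline depend on small interfaces rather than on a specific tool. The example below is a minimal illustration under that assumption: the `ExperimentTracker` and `ModelStore` protocols, the in-memory implementations, and `train_and_publish` are hypothetical names, and an adapter around a real tracker or registry would simply implement the same methods.

```python
from typing import Callable, Protocol, Sequence


class ExperimentTracker(Protocol):
    def log_metric(self, name: str, value: float) -> None: ...


class ModelStore(Protocol):
    def save(self, name: str, model: object) -> str: ...


class InMemoryTracker:
    """Toy tracker; a real adapter would forward to an experiment-tracking tool."""

    def __init__(self) -> None:
        self.metrics: dict[str, float] = {}

    def log_metric(self, name: str, value: float) -> None:
        self.metrics[name] = value


class InMemoryStore:
    """Toy model store; a real adapter would forward to a model registry."""

    def __init__(self) -> None:
        self.models: dict[str, object] = {}

    def save(self, name: str, model: object) -> str:
        self.models[name] = model
        return f"memory://{name}"  # illustrative URI scheme, not a real one


def train_and_publish(
    train_fn: Callable[[Sequence[float]], tuple[object, dict[str, float]]],
    data: Sequence[float],
    tracker: ExperimentTracker,
    store: ModelStore,
    name: str,
) -> str:
    # The pipeline only knows the two interfaces, so either side can be swapped.
    model, metrics = train_fn(data)
    for metric_name, value in metrics.items():
        tracker.log_metric(metric_name, value)
    return store.save(name, model)
```

Because `train_and_publish` never imports a concrete tool, replacing the tracker or the store is a change to one adapter class rather than to the pipeline itself.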

Best Practices for Designing MLOps Architectures

- Design for modularity: Allow swapping out tools without disrupting the pipeline.

- Emphasize automation: Manual interventions lead to errors and bottlenecks.

- Prioritize monitoring: Treat models like software services—measure, alert, and adapt.

- Ensure reproducibility: Use version control for data, code, and models.

- Build for collaboration: Create shared platforms that bridge data science, ML engineering, and DevOps teams.
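As a small illustration of the reproducibility practice above, a training run can be identified by a fingerprint derived from its data, parameters, and code version, so that two runs with the same fingerprint are expected to be comparable. This is a sketch under stated assumptions: the function name and the choice of SHA-256 over a canonical JSON payload are illustrative, and `code_version` is assumed to be something like a Git commit hash supplied by the caller.

```python
import hashlib
import json


def run_fingerprint(data_bytes: bytes, params: dict, code_version: str) -> str:
    """Return a deterministic ID for a training run's inputs."""
    payload = {
        "data_sha256": hashlib.sha256(data_bytes).hexdigest(),
        "params": params,
        "code_version": code_version,
    }
    # sort_keys makes the serialization independent of dict insertion order.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Storing this fingerprint alongside each experiment's metrics makes it easy to tell whether two results differ because of the model or because the data, parameters, or code changed underneath it.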

MLOps architectures aren’t one-size-fits-all. The right design depends on an organization’s maturity, data ecosystem, and business needs. Start small—perhaps with automated deployment pipelines—then scale toward full lifecycle management with monitoring and continuous training.

Ultimately, a robust MLOps architecture transforms ML from isolated experiments into production-ready, scalable, and business-critical systems.