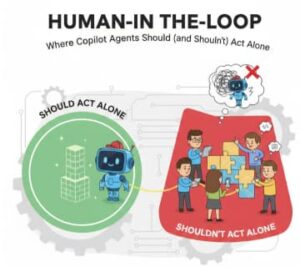

In the fast-evolving world of artificial intelligence, the term “Copilot agent” has become almost ubiquitous. These intelligent assistants—whether guiding developers in code completion, helping customer service teams respond to emails, or assisting radiologists interpreting scans—are transforming how work gets done. But as with any powerful tool, the key question isn’t just what these agents can do, but when they should act alone and when humans must stay in the loop.

This is where the concept of Human-in-the-Loop (HITL) becomes essential. It’s not about limiting AI; it’s about responsible collaboration between humans and machines.

What Is Human-in-the-Loop (HITL)?

At its core, HITL refers to systems where a human interacts with, supervises, or reviews an AI’s output before final action is taken. This isn’t just “a safety check”—it’s a fundamental design choice for trust, accuracy, and legal compliance.

HITL is especially important in domains where errors can be costly: medicine, law, safety systems, financial decisioning, autonomous vehicles, and more.

In contrast, there are contexts where Copilot agents can act autonomously—if the risk is low, the outcomes are reversible, and performance is reliable.

Why It Matters: The Balance Between Autonomy and Oversight

AI researchers and product leaders warn about two failure modes: automation bias (over-trusting AI recommendations when oversight is too light) and alert fatigue (humans disengaging because they are prompted too often). The sweet spot is not flipping a switch between “AI only” and “Human only,” but designing workflows where both parties amplify each other’s strengths.

Humans are great at:

- Complex judgement

- Ethical reasoning

- Contextual nuance

- Handling unexpected edge cases

AI agents are great at:

- Repetitive pattern recognition

- Processing large datasets

- Speedy computations

- Real-time predictions

Together, they create collaborative intelligence.

When Copilot Agents Should Act Alone

Here are contexts where you can safely let Copilot agents operate autonomously:

✅ Low-Risk, Reversible Tasks

If mistakes can be undone and consequences are minimal.

Examples:

- Auto-tagging images in a photo library

- Suggesting email subject lines

- Sorting customer support tickets into categories

✅ Highly Standardized and Predictable Workflows

Where patterns are consistent and well-defined.

Examples:

- Formatting documents

- Routine code formatting rules

- Data normalization in structured fields

✅ High-Volume Repetitive Work

Tasks that drain human resources but don’t require creativity or emotion.

Examples:

- Transcribing meeting notes

- Auto-responding to status updates

- Batch transformations

🧪 Conditions for Full Autonomy

Before enabling full autonomy for a Copilot agent, ensure:

- 95%+ accuracy in validation tests

- Clear rollback mechanisms

- Monitoring dashboards (for performance drift)

- Risk thresholds defined
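The checklist above can be turned into an explicit gate. The following is a minimal sketch, assuming a hypothetical `ReadinessReport` record that your own tooling fills in; the field names and the 0.95 default are illustrative, not a standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReadinessReport:
    """Hypothetical summary of an agent's readiness for full autonomy."""
    validation_accuracy: float      # fraction in [0, 1] from validation tests
    has_rollback: bool              # a clear rollback mechanism exists
    has_drift_monitoring: bool      # a dashboard tracks performance drift
    risk_thresholds_defined: bool   # risk thresholds are written down


def unmet_conditions(report: ReadinessReport, min_accuracy: float = 0.95) -> List[str]:
    """Return the checklist items that are not yet satisfied."""
    unmet = []
    if report.validation_accuracy < min_accuracy:
        unmet.append("validation accuracy below threshold")
    if not report.has_rollback:
        unmet.append("no rollback mechanism")
    if not report.has_drift_monitoring:
        unmet.append("no drift monitoring")
    if not report.risk_thresholds_defined:
        unmet.append("risk thresholds not defined")
    return unmet


def ready_for_autonomy(report: ReadinessReport) -> bool:
    """Autonomy is allowed only when every condition is met."""
    return not unmet_conditions(report)
```

Returning the list of unmet items, rather than a bare boolean, gives reviewers something concrete to act on.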

When HITL Is Essential: Copilot Agents Shouldn’t Act Alone

Certain domains demand human oversight due to risk, ethics, accountability, or legal requirements.

🚨 Safety-Critical Decisions

Medical diagnostics, autonomous driving, or command-and-control systems must include human checkpoints. A misclassified tumor or a wrong steering suggestion could be life-threatening.

⚖️ Legal and Ethical Judgment

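AI can replicate patterns in its training data, including biased ones, but it has no ethical judgment of its own and cannot be held accountable for a decision.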

Examples:

- Evaluating loan eligibility (legal fairness)

- Content moderation for nuanced social issues

- Legal contract interpretation

🤖 Ambiguous or Novel Scenarios

AI struggles when inputs are outside its training distribution.

If the data is unfamiliar—new regulatory requirements, unique customer complaints, or cultural interpretation—humans need to lead.

🧠 Creative Decision Making

Tasks involving originality, artistry, or strategy require human vision.

Examples:

- Designing product strategy

- Interpreting artistic direction

- Editorial choices in journalism

Practical Technical Steps for Implementing HITL with Copilot Agents

Here’s a simple, step-by-step technical blueprint you can follow when building systems that balance autonomy with human oversight.

Step 1: Define Decision Taxonomy

Classify tasks into:

- Autonomous (safe for the AI to act alone)

- Augmented (AI suggests, human approves)

- Human only

Create a matrix with:

| Task | Risk Level | AI Role | Human Role |
|---|---|---|---|
| Email sorting | Low | Autonomous | Monitor |
| Medical diagnosis | High | Suggest | Approve |
| Creative writing | Medium | Assist | Human edits |
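One way to make the matrix machine-readable is a small policy registry. This is a sketch under stated assumptions: the `Mode`, `TaskPolicy`, and `POLICIES` names are hypothetical, and mapping "Assist" to the augmented mode is an interpretation of the table rather than something it states.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"   # AI acts, human monitors
    AUGMENTED = "augmented"     # AI suggests, human approves or edits
    HUMAN_ONLY = "human_only"   # human decides without AI action


@dataclass(frozen=True)
class TaskPolicy:
    task: str
    risk: str
    mode: Mode


# Hypothetical registry mirroring the rows of the matrix.
POLICIES = {
    p.task: p
    for p in [
        TaskPolicy("email_sorting", "low", Mode.AUTONOMOUS),
        TaskPolicy("medical_diagnosis", "high", Mode.AUGMENTED),
        TaskPolicy("creative_writing", "medium", Mode.AUGMENTED),
    ]
}


def mode_for(task: str) -> Mode:
    """Look up a task's mode; unclassified tasks fall back to human only."""
    policy = POLICIES.get(task)
    return policy.mode if policy else Mode.HUMAN_ONLY
```

Defaulting unknown tasks to `HUMAN_ONLY` is a deliberately conservative choice: a task nobody has classified yet should not run unattended.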

Step 2: Create Confidence Thresholds

Configure your AI system to tag outputs with confidence scores.

Example:

{"confidence": 0.92, "output": "Positive case – likely 90% match"}

Set rules like:

- If confidence > 0.95 → Auto-execute

- If 0.75 ≤ confidence ≤ 0.95 → Human review

- If confidence < 0.75 → Escalate to human only
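These rules translate into a small routing function. The sketch below assumes confidence is a float in [0, 1] and sends the exact boundary values 0.95 and 0.75 to human review; the function and constant names are illustrative.

```python
AUTO_THRESHOLD = 0.95     # strictly above this: auto-execute
REVIEW_THRESHOLD = 0.75   # from this value up to AUTO_THRESHOLD: human review


def route(confidence: float) -> str:
    """Map a confidence score to one of three handling paths."""
    if not 0.0 <= confidence <= 1.0:
        # Also rejects NaN, since comparisons with NaN are always False.
        raise ValueError(f"confidence must be in [0, 1], got {confidence!r}")
    if confidence > AUTO_THRESHOLD:
        return "auto_execute"
    if confidence >= REVIEW_THRESHOLD:
        return "human_review"
    return "human_only"
```

With the sample output shown earlier (confidence 0.92), `route` returns `"human_review"`. The thresholds themselves should be tuned per task; a score is only useful here if the model's confidence is reasonably well calibrated.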

Step 3: Build Human Review Interfaces

Create dashboards that allow humans to:

- View flagged items

- Approve / edit AI output

- Provide feedback to the model

Tools like Jira workflows, Slack reviews, UI review panels, or custom tools can help.
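Whatever tool presents the items, the underlying state is usually a queue of flagged outputs with a review status. Here is a minimal in-memory sketch; `ReviewItem` and `ReviewQueue` are hypothetical names, and a real system would persist this state rather than keep it in a dictionary.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ReviewItem:
    item_id: str
    ai_output: str
    status: str = "pending"              # "pending", "approved", or "edited"
    final_output: Optional[str] = None   # what was actually accepted
    feedback: str = ""                   # reviewer's note for the model team


class ReviewQueue:
    """In-memory stand-in for the store behind a review dashboard."""

    def __init__(self) -> None:
        self._items: Dict[str, ReviewItem] = {}

    def flag(self, item_id: str, ai_output: str) -> None:
        """Add an AI output that needs human review."""
        self._items[item_id] = ReviewItem(item_id, ai_output)

    def pending(self) -> List[ReviewItem]:
        """Items a reviewer still has to look at."""
        return [i for i in self._items.values() if i.status == "pending"]

    def approve(self, item_id: str) -> ReviewItem:
        """Accept the AI output unchanged."""
        item = self._items[item_id]
        item.status = "approved"
        item.final_output = item.ai_output
        return item

    def edit(self, item_id: str, corrected: str, feedback: str = "") -> ReviewItem:
        """Replace the AI output with a human correction."""
        item = self._items[item_id]
        item.status = "edited"
        item.final_output = corrected
        item.feedback = feedback
        return item
```

Keeping both `ai_output` and `final_output` on each item preserves the before-and-after pair for every human edit.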

Step 4: Feedback Loop for Retraining

Every human correction should feed back into your system:

Human edits → Stored as labeled data → Retrain model monthly

This improves accuracy and reduces long-term human load.
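The "stored as labeled data" step can be as simple as appending each correction to a JSON Lines file that a retraining job reads later. This is a sketch, assuming a hypothetical `Correction` record and a local file; the retraining itself is out of scope here.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path
from typing import List, Tuple


@dataclass
class Correction:
    input_text: str     # what the agent was given
    ai_output: str      # what the agent produced
    human_output: str   # what the reviewer changed it to


def append_correction(path: Path, correction: Correction) -> None:
    """Append one correction as a single JSON line."""
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(correction)) + "\n")


def load_labeled_pairs(path: Path) -> List[Tuple[str, str]]:
    """Read back (input, human label) pairs for the next retraining run."""
    pairs = []
    with path.open(encoding="utf-8") as f:
        for line in f:
            if line.strip():
                record = json.loads(line)
                pairs.append((record["input_text"], record["human_output"]))
    return pairs
```

An append-only log keeps the write path trivial for the review tool and lets the retraining schedule (monthly, in the flow above) change without touching it.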

Step 5: Monitor and Audit

Set up real-time metrics for:

- False positives / negatives

- Human override rates

- Time to review

- Drift indicators

Use tools like Grafana, Kibana, or custom logs to visualize.
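Two of these metrics, human override rate and time to review, can be computed directly from review logs before being exported to whichever dashboard you use. The sketch below assumes a hypothetical `ReviewEvent` record with one entry per reviewed item.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class ReviewEvent:
    ai_label: str          # what the agent proposed
    final_label: str       # what the human accepted or changed it to
    review_seconds: float  # time the reviewer spent on the item


def override_rate(events: Sequence[ReviewEvent]) -> float:
    """Fraction of reviewed items where the human changed the AI output."""
    if not events:
        return 0.0
    overridden = sum(1 for e in events if e.ai_label != e.final_label)
    return overridden / len(events)


def mean_review_seconds(events: Sequence[ReviewEvent]) -> float:
    """Average time a human spent per reviewed item."""
    if not events:
        return 0.0
    return sum(e.review_seconds for e in events) / len(events)
```

A rising override rate over successive time windows is one simple drift indicator: it suggests the model's outputs are diverging from what reviewers accept.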

Why HITL Is Not a Compromise—It’s a Design Philosophy

Human-in-the-Loop isn’t merely a safety net; it’s a strategic advantage. It helps AI systems remain trusted, fair, ethical, and adaptable. Copilot agents free humans from repetitive drudgery, while humans keep AI grounded in the values we care about.

In the end, the best systems are not the ones where AI replaces humans—but where AI helps humans be smarter, faster, and more insightful than either could be alone.