Cloud computing is all about flexibility, efficiency, and growth—and scalability is at the heart of it. Whether you’re building a startup MVP or running enterprise-grade applications, your system should be able to scale based on changing demands.

In this post, we’ll dive into three essential scalability patterns—Horizontal Scaling, Auto-Scaling, and Queue-Based Load Leveling—and explore how they’re implemented in AWS and Azure.

1. 🌐 Horizontal Scaling (Scale Out/In)

What it is:

Rather than upgrading a single server (vertical scaling), horizontal scaling involves adding or removing multiple servers (scale-out or scale-in). This allows your system to handle more traffic without overloading a single machine.

🔧 AWS Example:

- Amazon EC2 Auto Scaling Groups (ASG): Add or remove EC2 instances based on demand.

- Elastic Load Balancing (ELB): Distributes incoming traffic across healthy instances and stops routing to instances that fail health checks.

🔧 Azure Example:

- Virtual Machine Scale Sets (VMSS): Automatically manage a group of load-balanced VMs.

- Azure Load Balancer or Application Gateway: Spread incoming traffic evenly across the VM instances.

🧠 Best Practice:

Keep your instances stateless so any one of them can handle incoming requests interchangeably. Use shared storage or databases for persistence.
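To make the idea concrete, here is a minimal sketch of what a load balancer does in front of stateless instances. It is a toy model, not how ELB or Azure Load Balancer work internally: the `RoundRobinBalancer` class and its method names are hypothetical, and instances are represented as plain strings.

```python
class RoundRobinBalancer:
    """Toy load balancer: rotates requests across healthy instances.

    Hypothetical illustration only; real load balancers also run
    health checks, drain connections, and support other algorithms.
    """

    def __init__(self, instances):
        self.instances = list(instances)
        self.healthy = set(self.instances)
        self._next = 0  # index of the next instance to try

    def add_instance(self, instance):
        # Scale out: the new instance starts receiving traffic.
        self.instances.append(instance)
        self.healthy.add(instance)

    def remove_instance(self, instance):
        # Scale in: the instance stops receiving traffic.
        self.instances.remove(instance)
        self.healthy.discard(instance)

    def mark_unhealthy(self, instance):
        # Keep the instance registered, but skip it when routing.
        self.healthy.discard(instance)

    def route(self):
        # Because instances are stateless, any healthy one will do.
        if not self.healthy:
            raise RuntimeError("no healthy instances available")
        for _ in range(len(self.instances)):
            candidate = self.instances[self._next % len(self.instances)]
            self._next = (self._next + 1) % len(self.instances)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy instances available")


lb = RoundRobinBalancer(["vm-a", "vm-b", "vm-c"])
first_four = [lb.route() for _ in range(4)]  # wraps around to vm-a
```

The sketch only works because no request depends on which instance served the previous one. If instances held session state locally, adding or removing one would break users mid-session.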

2. 📈 Auto-Scaling

What it is:

Auto-scaling adjusts computing resources automatically based on real-time metrics like CPU usage, memory, or custom thresholds. The goal is to add capacity before performance degrades and release it when demand drops, so you pay only for what you actually need.

🔧 AWS Example:

- Auto Scaling Groups + CloudWatch Alarms: Add EC2 instances when a metric such as average CPU usage exceeds a threshold, and remove them when it drops back down.

- AWS Lambda: Runs more concurrent executions as request volume grows, with no servers to manage (within your account's concurrency limits).

🔧 Azure Example:

- Azure App Service Plan Auto-Scaling: Scale web apps based on CPU/memory or custom rules.

- Azure Functions: Serverless platform that scales functions dynamically based on event triggers.

🧠 Best Practice:

Tune your scaling policies to avoid both thrashing (scaling out and in too frequently) and lag (reacting too slowly). Use cooldown periods, and predictive scaling where the platform supports it.
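A cooldown is easier to reason about in code. The sketch below is a simplified, hypothetical threshold policy, not the actual algorithm used by AWS Auto Scaling or Azure autoscale. The class names, the 70%/30% thresholds, and the 300-second cooldown are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    # All values are illustrative assumptions, not platform defaults.
    scale_out_cpu: float = 70.0   # add an instance above this average CPU %
    scale_in_cpu: float = 30.0    # remove an instance below this average CPU %
    cooldown_seconds: float = 300.0
    min_instances: int = 1
    max_instances: int = 10


class AutoScaler:
    def __init__(self, policy, instances=1):
        self.policy = policy
        self.instances = instances
        self._last_change = None  # timestamp of the last scaling action

    def evaluate(self, avg_cpu, now):
        """Return the instance count after evaluating one metric sample."""
        p = self.policy
        # During the cooldown, ignore the metric so the previous change
        # has time to take effect. This is what prevents thrashing.
        if self._last_change is not None and now - self._last_change < p.cooldown_seconds:
            return self.instances

        desired = self.instances
        if avg_cpu > p.scale_out_cpu:
            desired += 1
        elif avg_cpu < p.scale_in_cpu:
            desired -= 1
        desired = max(p.min_instances, min(p.max_instances, desired))

        if desired != self.instances:
            self.instances = desired
            self._last_change = now
        return self.instances


scaler = AutoScaler(ScalingPolicy(), instances=2)
after_spike = scaler.evaluate(avg_cpu=85.0, now=0)       # scales out to 3
during_cooldown = scaler.evaluate(avg_cpu=90.0, now=60)  # still 3
```

The gap between the scale-out and scale-in thresholds matters as much as the cooldown. If both were set to 50%, a load hovering around that value would trigger a change on nearly every evaluation.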

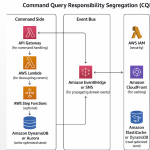

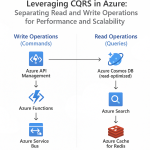

3. 📬 Queue-Based Load Leveling

What it is:

This pattern introduces a message queue between your frontend and backend services. Incoming requests are queued and processed asynchronously by workers, smoothing out spikes and improving system resilience.
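The pattern can be sketched in-process with Python's standard `queue` and `threading` modules. This is only an analogy for a managed queue service: the `run_burst` function is hypothetical, and doubling a number stands in for slow backend work.

```python
import queue
import threading


def run_burst(num_requests, num_workers):
    """Enqueue a burst of requests, then let a fixed worker pool drain it.

    Returns the backlog size right after the burst and the sorted results.
    """
    jobs = queue.Queue()
    results = []
    lock = threading.Lock()

    def worker():
        while True:
            job = jobs.get()
            if job is None:  # sentinel: no more work for this worker
                jobs.task_done()
                return
            with lock:
                results.append(job * 2)  # stand-in for slow backend work
            jobs.task_done()

    # Frontend: accept the whole burst immediately by enqueueing it.
    for request_id in range(num_requests):
        jobs.put(request_id)
    backlog_after_burst = jobs.qsize()  # exact here: no consumers running yet

    # Backend: a small, fixed pool works through the backlog at its own pace.
    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for _ in threads:
        jobs.put(None)
    jobs.join()
    for t in threads:
        t.join()

    return backlog_after_burst, sorted(results)


backlog, processed = run_burst(num_requests=20, num_workers=3)
```

The frontend never waits on the workers: the burst of 20 requests is accepted at once, and the three workers process it without being sized for the peak.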

🔧 AWS Example:

- Amazon SQS (Simple Queue Service): Decouple components and store messages temporarily.

- AWS Lambda or EC2 workers: Pull messages from the queue and process them.

🔧 Azure Example:

- Azure Queue Storage or Service Bus: Queue messages for backend processing.

- Azure Functions or WebJobs: Triggered by new queue messages and process them on demand.

🧠 Best Practice:

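Ensure your queue consumers are idempotent, so duplicate message processing doesn’t lead to inconsistent results. This matters because queues such as SQS standard queues guarantee at-least-once delivery, so the same message can occasionally arrive twice. Monitor queue length to adjust worker scaling.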
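Both halves of this advice can be sketched briefly. The example below is a hedged illustration rather than a production design: the message shape (`id`, `account`, `amount`), the `IdempotentConsumer` class, and the `desired_workers` helper are all hypothetical. A real consumer would keep processed IDs in a durable store shared by all workers, not in memory.

```python
import math


class IdempotentConsumer:
    """Applies each message at most once, keyed by its message ID."""

    def __init__(self):
        # Assumption: in-memory state for illustration only. In practice
        # this would be a durable store shared by all workers.
        self.processed_ids = set()
        self.balances = {}

    def handle(self, message):
        """Return True if the message was applied, False if it was a duplicate."""
        if message["id"] in self.processed_ids:
            return False  # already applied; redelivery is harmless
        account = message["account"]
        self.balances[account] = self.balances.get(account, 0) + message["amount"]
        self.processed_ids.add(message["id"])
        return True


def desired_workers(queue_length, msgs_per_worker, min_workers=1, max_workers=20):
    """Size the worker pool from the backlog, clamped to fixed bounds."""
    needed = math.ceil(queue_length / msgs_per_worker)
    return max(min_workers, min(max_workers, needed))


consumer = IdempotentConsumer()
deposit = {"id": "msg-1", "account": "alice", "amount": 50}
consumer.handle(deposit)
consumer.handle(deposit)  # duplicate delivery: balance is unchanged
```

Without the ID check, the second delivery would credit the account twice. The `desired_workers` helper shows one simple way to turn a queue-length metric into a worker count; the per-worker capacity is something you would measure for your own workload.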

📊 Choosing the Right Pattern

| Pattern | Use When | Tools (AWS) | Tools (Azure) |
|---|---|---|---|
| Horizontal Scaling | You need to increase capacity without downtime | EC2 + ASG + ELB | VMSS + Load Balancer |
| Auto-Scaling | Traffic/load fluctuates based on time or usage | Auto Scaling Groups, Lambda | App Service Auto-Scaling, Functions |
| Queue-Based Load Leveling | Back-end processing is slow or bursty | SQS + Lambda | Queue Storage + Azure Functions |

Cloud scalability isn’t just about adding more power—it’s about intelligent architecture that adapts to demand, manages cost, and ensures high availability. By leveraging these patterns in AWS and Azure, you can build systems that grow with your users—automatically, efficiently, and reliably.

💡 Start small, scale smart. Whether you’re running on EC2 or Azure Functions, the right scalability pattern makes all the difference.