The rapid evolution of artificial intelligence has ushered in a new class of systems often referred to as copilot agents. These agents are neither fully autonomous decision-makers nor passive tools. They sit in the middle, augmenting human capability by assisting with tasks, providing insights, and automating workflows while keeping humans in the loop. From coding assistants to enterprise productivity tools, copilot agents are becoming foundational to how we interact with software.

Understanding their architecture reveals why they are so powerful and what challenges come with building them.

1. What Is a Copilot Agent?

A copilot agent is an AI-powered system designed to collaborate with users in real time. Unlike traditional automation systems that operate independently, copilots are context-aware assistants that respond dynamically to user input, preferences, and goals.

They typically:

- Interpret natural language instructions

- Access external tools and data sources

- Generate or transform content

- Adapt based on user feedback

The architecture behind these capabilities is layered and modular, allowing flexibility, scalability, and continuous improvement.

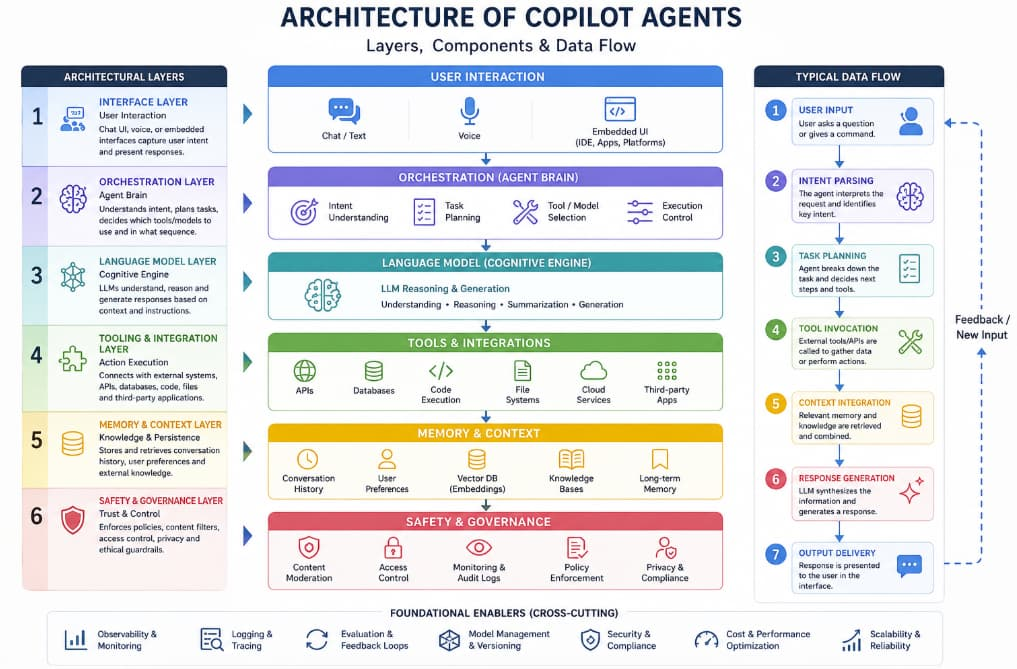

2. Core Architectural Layers

A well-designed copilot agent consists of several interconnected layers, each responsible for a specific function.

a. Interface Layer (User Interaction)

This is where humans interact with the copilot. It may take the form of:

- Chat interfaces

- Voice assistants

- Embedded UI components (e.g., inside an IDE or productivity app)

The interface layer captures user intent in natural language and presents outputs in a digestible format. It also manages conversational context, ensuring continuity across interactions.

b. Orchestration Layer (Agent Brain)

The orchestration layer is the central nervous system of the copilot. It determines:

- What the user is asking

- Which tools or models to invoke

- How to sequence tasks

This layer often uses techniques such as:

- Prompt engineering

- Task planning

- Decision trees or agent frameworks

Modern systems may implement multi-step reasoning, where the agent breaks down a complex task into smaller subtasks and executes them sequentially.
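
To make the idea of sequential subtasks concrete, here is a minimal sketch of an orchestrator. The `Step`, `plan`, and `orchestrate` names are hypothetical, and the planner is hard-coded; in a real copilot the list of steps would more likely come from the LLM or an agent framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]  # reads the shared state, returns new entries


def plan(request: str) -> list[Step]:
    """Hypothetical planner. A real copilot would ask the LLM for this list."""
    return [
        Step("parse", lambda s: {"target": s["request"].removeprefix("summarize ").strip()}),
        Step("fetch", lambda s: {"document": f"<contents of {s['target']}>"}),
        Step("summarize", lambda s: {"answer": f"Summary of {s['document']}"}),
    ]


def orchestrate(request: str) -> dict:
    """Run each planned step in order, threading shared state through them."""
    state: dict = {"request": request, "trace": []}
    for step in plan(request):
        state.update(step.run(state))
        state["trace"].append(step.name)
    return state


result = orchestrate("summarize report.txt")
print(result["trace"])   # ['parse', 'fetch', 'summarize']
print(result["answer"])  # Summary of <contents of report.txt>
```

Keeping a `trace` of executed steps is one simple way to make the agent's sequencing inspectable after the fact.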

c. Language Model Layer (Cognitive Engine)

At the heart of most copilot agents lies a large language model (LLM). This component is responsible for:

- Understanding user input

- Generating responses

- Performing reasoning tasks

The LLM can be fine-tuned or augmented with additional context to improve domain-specific performance. It acts as the “thinking engine” of the system.

However, raw LLM output is rarely sufficient on its own. That’s where other layers come in.

d. Tooling and Integration Layer

Copilot agents gain real power when they can interact with external systems. This layer connects the agent to:

- APIs

- Databases

- File systems

- Third-party applications

For example, a coding copilot might:

- Fetch repository data

- Run code

- Query documentation

Tool usage is often mediated through structured function calls or plugins, ensuring that the agent interacts safely and predictably with external systems.
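
One way to picture a structured function call is a registry that validates each request before running anything. The sketch below assumes the model emits a JSON object with `name` and `arguments` fields; that shape, the `TOOL_REGISTRY` dictionary, and the `add` tool are illustrative assumptions rather than any particular vendor's format.

```python
import json
from typing import Any, Callable

# Registry of tools the agent may call: name -> (parameter types, implementation).
TOOL_REGISTRY: dict[str, tuple[dict[str, type], Callable[..., Any]]] = {}


def register_tool(name: str, params: dict[str, type], fn: Callable[..., Any]) -> None:
    TOOL_REGISTRY[name] = (params, fn)


def call_tool(raw_call: str) -> Any:
    """Validate a JSON-encoded tool call, then execute it."""
    call = json.loads(raw_call)
    name, args = call["name"], call.get("arguments", {})
    if name not in TOOL_REGISTRY:
        raise ValueError(f"unknown tool: {name}")
    params, fn = TOOL_REGISTRY[name]
    if set(args) != set(params):
        raise ValueError(f"expected arguments {sorted(params)}, got {sorted(args)}")
    for key, expected_type in params.items():
        if not isinstance(args[key], expected_type):
            raise TypeError(f"argument {key!r} must be {expected_type.__name__}")
    return fn(**args)


# Hypothetical tool for illustration only.
register_tool("add", {"a": int, "b": int}, lambda a, b: a + b)

print(call_tool('{"name": "add", "arguments": {"a": 2, "b": 3}}'))  # 5
```

Because unknown tools and malformed arguments are rejected before execution, the model can only trigger behavior that was explicitly registered.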

e. Memory and Context Layer

To provide meaningful assistance, copilots must remember context. This layer handles:

- Short-term memory (conversation history)

- Long-term memory (user preferences, past interactions)

- External knowledge retrieval

Techniques like vector databases and embeddings are commonly used to store and retrieve relevant information efficiently. This enables the agent to maintain continuity and personalization over time.
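
The retrieval idea can be sketched without any external service. In the example below, `embed` is a toy stand-in that counts words (a production system would use a learned embedding model), and `MemoryStore` is a hypothetical in-memory substitute for a vector database.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. Real systems use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self.items: list[tuple[Counter, str]] = []

    def add(self, text: str) -> None:
        self.items.append((embed(text), text))

    def search(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


store = MemoryStore()
store.add("user prefers dark mode")
store.add("the project database is postgres")
print(store.search("which database does the project use"))
# ['the project database is postgres']
```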

f. Safety and Governance Layer

As copilots become more capable, ensuring responsible behavior becomes critical. This layer enforces:

- Content moderation

- Access control

- Data privacy policies

- Ethical constraints

It may include filters, rule-based systems, and monitoring tools to prevent harmful or unintended outputs.
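
As a rough illustration of the rule-based end of this layer, the sketch below pairs a role-based permission check with a regex output filter. The roles, actions, and the single SSN-like pattern are made-up examples; real deployments typically combine many such rules with model-based moderation.

```python
import re

# Illustrative rules only: one pattern for US SSN-like strings, two roles.
SENSITIVE_PATTERNS = [re.compile(r"\b\d{3}-\d{2}-\d{4}\b")]
ROLE_PERMISSIONS = {
    "viewer": {"read_docs"},
    "admin": {"read_docs", "delete_docs"},
}


def is_allowed(role: str, action: str) -> bool:
    """Access control: unknown roles get no permissions."""
    return action in ROLE_PERMISSIONS.get(role, set())


def redact(text: str) -> str:
    """Output filter: mask anything matching a sensitive pattern."""
    for pattern in SENSITIVE_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text


print(is_allowed("viewer", "delete_docs"))  # False
print(redact("SSN 123-45-6789 on file"))    # SSN [REDACTED] on file
```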

3. Data Flow in a Copilot Agent

To understand how these layers interact, consider a typical workflow:

- User Input: A user asks a question or gives a command.

- Intent Parsing: The system interprets the request using the LLM.

- Task Planning: The orchestration layer determines required actions.

- Tool Invocation: External tools or APIs are called if needed.

- Context Integration: Relevant memory or documents are retrieved.

- Response Generation: The LLM synthesizes a response.

- Output Delivery: The result is presented to the user.

This pipeline may loop multiple times for complex tasks, enabling iterative refinement.
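
The looping behavior can be sketched as a bounded loop in which the model either requests a tool or returns a final answer. Here `fake_model` is a scripted stand-in for an LLM call, and `PIPELINE_TOOLS` holds a single hypothetical tool; the turn limit is an assumption added so the loop always terminates.

```python
from typing import Callable

# Hypothetical tool table for this example.
PIPELINE_TOOLS: dict[str, Callable[[str], str]] = {
    "word_count": lambda text: str(len(text.split())),
}


def fake_model(request: str, observations: list[str]) -> dict:
    """Stand-in for an LLM call: asks for one tool call, then answers."""
    if not observations:
        return {"action": "tool", "tool": "word_count", "input": request}
    return {"action": "final", "answer": f"Your message has {observations[-1]} words."}


def run_pipeline(request: str, model: Callable[[str, list[str]], dict] = fake_model,
                 max_turns: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_turns):
        decision = model(request, observations)          # intent parsing and planning
        if decision["action"] == "final":
            return decision["answer"]                    # response generation
        tool = PIPELINE_TOOLS[decision["tool"]]          # tool invocation
        observations.append(tool(decision["input"]))     # context integration
    return "Stopped: turn limit reached."


print(run_pipeline("count these four words"))  # Your message has 4 words.
```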

4. Design Patterns in Copilot Architectures

Several architectural patterns have emerged in building copilot agents:

a. Retrieval-Augmented Generation (RAG)

This pattern enhances LLM responses by retrieving relevant data from external sources before generating an answer. Grounding the answer in retrieved text tends to improve accuracy and reduce hallucinations, although it does not eliminate them.
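
A minimal sketch of the retrieve-then-generate flow is shown below. Retrieval here is naive keyword overlap and the prompt wording is an assumption; the point is only that retrieved passages are placed in the prompt before the model is asked to answer. The `corpus` contents are invented for the example.

```python
def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Rank documents by how many query words they share (a toy retriever)."""
    query_words = set(query.lower().split())
    ranked = sorted(
        corpus.items(),
        key=lambda item: len(query_words & set(item[1].lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, corpus: dict[str, str]) -> str:
    """Assemble the prompt that would be sent to the LLM."""
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, corpus))
    return (
        "Answer using only the sources below and cite them by id.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {query}"
    )


corpus = {
    "a": "the deploy script lives in tools/deploy.sh",
    "b": "lunch is at noon",
    "c": "deploy requires the staging flag",
}
print(build_prompt("how do I deploy", corpus))
```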

b. Tool-Using Agents

Instead of relying solely on language generation, these agents can:

- Execute code

- Query databases

- Perform calculations

Delegating exact operations to deterministic tools makes them more reliable for task execution than text generation alone.

c. Multi-Agent Systems

In more advanced setups, multiple specialized agents collaborate. For example:

- One agent handles planning

- Another executes tasks

- A third verifies results

This modular approach improves scalability and robustness.
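
The planner, executor, and verifier roles can be illustrated with three plain functions. In this sketch each "agent" is deterministic and the goal format (`add 2 3; mul 4 5`) is invented for the example; in practice each role would usually be backed by its own model call or prompt.

```python
def planner(goal: str) -> list[str]:
    """Hypothetical planner: splits a goal such as 'add 2 3; mul 4 5' into tasks."""
    return [task.strip() for task in goal.split(";") if task.strip()]


def executor(task: str) -> int:
    op, a, b = task.split()
    return int(a) + int(b) if op == "add" else int(a) * int(b)


def verifier(task: str, result: int) -> bool:
    """Checks the result by inverting the operation instead of repeating it."""
    op, a, b = task.split()
    a, b = int(a), int(b)
    if op == "add":
        return result - b == a
    if b == 0:
        return result == 0
    return result % b == 0 and result // b == a


def run_team(goal: str) -> list[tuple[str, int, bool]]:
    report = []
    for task in planner(goal):
        result = executor(task)
        report.append((task, result, verifier(task, result)))
    return report


print(run_team("add 2 3; mul 4 5"))
# [('add 2 3', 5, True), ('mul 4 5', 20, True)]
```

Having the verifier check results by a different route than the executor is a design choice: a check that simply repeats the same computation would repeat the same mistakes.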

d. Human-in-the-Loop Systems

Copilot agents are designed to keep humans involved. Users can:

- Approve or reject actions

- Provide corrections

- Guide the agent’s behavior

This supports accountability and helps build trust.
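
An approval gate is one simple way to express this pattern in code. The sketch below assumes a hypothetical `approve` callback, which in a real product would prompt the user through the interface layer; here it is stubbed to reject so the effect is visible.

```python
from typing import Callable


def execute_with_approval(
    description: str,
    perform: Callable[[], str],
    approve: Callable[[str], bool],
) -> str:
    """Run `perform` only if the human reviewer approves the described action."""
    if not approve(description):
        return f"rejected: {description}"
    return perform()


deleted: list[str] = []


def delete_branch() -> str:
    deleted.append("old-feature")
    return "deleted branch old-feature"


# In a real UI `approve` would prompt the user; here it is a stub that says no.
print(execute_with_approval("delete branch old-feature", delete_branch, lambda d: False))
# rejected: delete branch old-feature
print(deleted)  # []
```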

5. Challenges in Building Copilot Agents

Despite their promise, copilot agents come with significant challenges:

a. Context Management

Maintaining relevant context without overwhelming the system is difficult. Too little context leads to poor responses; too much increases cost and latency.
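
A common way to handle this trade-off is to keep only as much recent history as fits a budget. The sketch below is one such approach, assuming a crude `estimate_tokens` helper that counts whitespace-separated words; a real system would use the model's own tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Crude proxy: one token per whitespace-separated word."""
    return len(text.split())


def trim_history(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose combined size fits the budget."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):       # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))              # restore chronological order


history = ["one two three", "four five", "six"]
print(trim_history(history, budget=3))  # ['four five', 'six']
```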

b. Reliability and Hallucination

LLMs can generate plausible but incorrect information. Combining them with tools and retrieval systems helps mitigate this issue.

c. Latency and Performance

Multi-step reasoning and tool usage can introduce delays. Optimizing response time while maintaining quality is a key engineering challenge.
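
When tool calls do not depend on each other, running them concurrently is one way to cut waiting time. The sketch below uses Python's `asyncio.gather`; `fake_tool` is a stand-in that simulates network delay, and the tool names are invented for the example.

```python
import asyncio


async def fake_tool(name: str, delay: float) -> str:
    """Stand-in for a slow network call."""
    await asyncio.sleep(delay)
    return f"{name}: ok"


async def call_concurrently(calls: list[tuple[str, float]]) -> list[str]:
    # gather() runs the awaitables concurrently and returns results in input order.
    return await asyncio.gather(*(fake_tool(name, delay) for name, delay in calls))


results = asyncio.run(call_concurrently([("search", 0.05), ("database", 0.05)]))
print(results)  # ['search: ok', 'database: ok']
```

With this structure the total wait is roughly that of the slowest call rather than the sum of all calls.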

d. Security Risks

Giving agents access to tools and data introduces potential vulnerabilities. Proper sandboxing and permission controls are essential.
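
As a small example of a permission control, the sketch below confines a file tool to one directory by resolving every requested path and rejecting anything that escapes it. The `workspace` directory name is hypothetical, and this path check is only one layer; it is not a substitute for process-level sandboxing.

```python
from pathlib import Path


def resolve_in_sandbox(root: Path, requested: str) -> Path:
    """Return the absolute path for `requested`, refusing anything outside `root`."""
    root = root.resolve()
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):  # Path.is_relative_to needs Python 3.9+
        raise PermissionError(f"{requested!r} is outside the sandbox")
    return candidate


sandbox = Path.cwd() / "workspace"
print(resolve_in_sandbox(sandbox, "notes/todo.txt").name)  # todo.txt

try:
    resolve_in_sandbox(sandbox, "../secrets.env")
except PermissionError as err:
    print(err)  # '../secrets.env' is outside the sandbox
```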

e. User Trust

Users must feel confident that the copilot is accurate, safe, and aligned with their goals. Transparency and explainability play a major role here.

6. Future Directions

The architecture of copilot agents is still evolving. Some emerging trends include:

- Deeper personalization through long-term memory

- Proactive assistance, where agents anticipate user needs

- Cross-platform integration, enabling seamless workflows

- Improved reasoning capabilities, reducing reliance on human correction

As these systems mature, the boundary between “tool” and “collaborator” will continue to blur.

Copilot agents represent a significant shift in how humans interact with technology. Their architecture, built on layered systems combining language models, orchestration logic, tools, and memory, enables them to function as intelligent collaborators rather than passive utilities.

Designing effective copilot agents requires balancing power with control, intelligence with safety, and automation with human oversight. As organizations continue to adopt these systems, understanding their architecture is not just a technical necessity; it is a strategic advantage.

The future of software is not just about what machines can do alone, but what humans and intelligent agents can achieve together.