In today’s cloud-native and microservices-driven world, Event Sourcing is gaining traction as a powerful architectural pattern for managing application state. Unlike traditional approaches that store only the current state of data, Event Sourcing stores every change to the application state as an immutable event. This pattern can enhance auditability, scalability, and resilience — especially when combined with the tools and services available in Microsoft Azure.

🔄 What Is Event Sourcing?

Event Sourcing is a design pattern where state changes are captured as a series of events rather than overwriting the current state. These events are immutable records of things that happened in your system — like OrderPlaced, ProductShipped, or UserRegistered.

Instead of storing the final result of an operation, you store the event that led to that result. The current state can then be reconstructed by replaying all events in the order they occurred.

Example:

Rather than having a row in a Users table that gets updated when a user changes their email, you store events:

- UserRegistered

- EmailChanged

To get the current email, you replay all events from the beginning.
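Here is a minimal C# sketch of that replay. It assumes two hypothetical record types, UserRegistered and EmailChanged, already loaded in memory in the order they were stored. Each event is applied in turn, so the most recent EmailChanged determines the current email:

```csharp
using System;
using System.Collections.Generic;

// Hypothetical event types for the email example; real events would also carry
// the envelope fields (eventId, timestamp, aggregateId).
public abstract record UserEvent(string UserId);
public sealed record UserRegistered(string UserId, string Email) : UserEvent(UserId);
public sealed record EmailChanged(string UserId, string NewEmail) : UserEvent(UserId);

// Current state, derived from the events rather than stored as the source of truth.
public sealed record UserState(string UserId, string Email);

public static class UserReplay
{
    // Applies every event in stored order; returns null if the stream is empty.
    public static UserState? Replay(IEnumerable<UserEvent> events)
    {
        UserState? state = null;
        foreach (var e in events)
        {
            state = e switch
            {
                UserRegistered r => new UserState(r.UserId, r.Email),
                EmailChanged c when state is not null => state with { Email = c.NewEmail },
                EmailChanged => throw new InvalidOperationException("EmailChanged before UserRegistered"),
                _ => state
            };
        }
        return state;
    }
}
```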

☁️ Why Use Event Sourcing in Azure?

Azure provides a robust set of cloud-native services that make Event Sourcing easier to implement and to scale:

- Azure Event Hubs / Azure Service Bus: Stream high-volume event data (Event Hubs) or deliver events reliably to other services (Service Bus).

- Azure Cosmos DB / Azure Table Storage: Store the event log or snapshots.

- Azure Functions: Handle events and process side effects.

- Azure Event Grid: Notify subscribers of changes.

- Azure Blob Storage: Store serialized event data cost-effectively.

These services let you build a scalable, distributed event sourcing system with minimal infrastructure management.

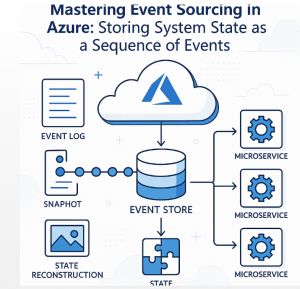

🧱 Architecture Overview

A typical Event Sourcing architecture in Azure might look like this:

- Command Input: Users send commands (e.g., PlaceOrder).

- Command Handler: Validates and processes commands.

- Event Creation: Converts valid commands into events (e.g., OrderPlaced).

- Event Store: Saves events in Cosmos DB, Blob Storage, or an append-only event log.

- Projections: Azure Functions or WebJobs consume events and build materialized views (e.g., current orders).

- Query Layer: Reads from these projections for fast and simple queries.
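The flow above can be sketched in C# as a purely in-memory illustration. The PlaceOrder, OrderPlaced, and OrderSummary types and the OrderPipeline class are hypothetical. A List stands in for the event store and a Dictionary stands in for the materialized view. In Azure, the projection step would typically run asynchronously (for example, in a Function), so the read model would lag slightly behind the log:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical command, event, and read-model types.
public sealed record PlaceOrder(string OrderId, string UserId, IReadOnlyList<string> Items);
public sealed record OrderPlaced(string OrderId, string UserId, IReadOnlyList<string> Items, DateTimeOffset Timestamp);
public sealed record OrderSummary(string OrderId, string UserId, int ItemCount);

public sealed class OrderPipeline
{
    // Stand-in for the event store (Cosmos DB, Blob Storage, ...): append-only.
    private readonly List<OrderPlaced> _eventLog = new();
    // Stand-in for a materialized view that the query layer reads.
    private readonly Dictionary<string, OrderSummary> _ordersView = new();

    public IReadOnlyList<OrderPlaced> EventLog => _eventLog;

    // Command handler: validate, convert the command into an event, append it, project it.
    public OrderPlaced Handle(PlaceOrder cmd)
    {
        if (cmd.Items.Count == 0)
            throw new ArgumentException("An order needs at least one item.", nameof(cmd));
        if (_eventLog.Any(e => e.OrderId == cmd.OrderId))
            throw new InvalidOperationException($"Order {cmd.OrderId} already exists.");

        var evt = new OrderPlaced(cmd.OrderId, cmd.UserId, cmd.Items, DateTimeOffset.UtcNow);
        _eventLog.Add(evt);   // events are only ever appended, never updated
        Project(evt);         // done inline here; asynchronous in a real deployment
        return evt;
    }

    // Projection: fold the event into the read model.
    private void Project(OrderPlaced evt) =>
        _ordersView[evt.OrderId] = new OrderSummary(evt.OrderId, evt.UserId, evt.Items.Count);

    // Query layer: reads only from the projection, never from the event log.
    public OrderSummary? GetOrder(string orderId) =>
        _ordersView.TryGetValue(orderId, out var summary) ? summary : null;
}
```

GetOrder never touches the event log. That separation lets you rebuild a read model, or add a new one, by replaying the log.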

🛠️ Implementing Event Sourcing in Azure

1. Storing Events

Choose reliable, scalable storage for your event log:

- Azure Cosmos DB (multi-region, low latency, JSON-friendly)

- Azure Blob Storage (cheap, ideal for cold/archived events)

- Azure Table Storage (lightweight and cost-effective)

Each event should include:

```json
{
  "eventId": "guid",
  "eventType": "OrderPlaced",
  "timestamp": "2025-07-27T12:00:00Z",
  "aggregateId": "order-123",
  "data": {
    "userId": "user-456",
    "items": ["item-1", "item-2"]
  }
}
```
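If you produce these documents from .NET, one option is a generic envelope record serialized with System.Text.Json. The type names here (OrderPlacedData, EventEnvelope, EventSerializer) are illustrative assumptions. JsonSerializerDefaults.Web gives camelCase property names that match the fields above. Because eventId is typed as Guid in this sketch, the "guid" placeholder must be a real GUID when you deserialize:

```csharp
using System;
using System.Text.Json;

// Hypothetical payload for OrderPlaced, mirroring the "data" object.
public sealed record OrderPlacedData(string UserId, string[] Items);

// Hypothetical envelope mirroring the top-level event fields.
public sealed record EventEnvelope<TData>(
    Guid EventId, string EventType, DateTimeOffset Timestamp, string AggregateId, TData Data);

public static class EventSerializer
{
    // Web defaults: camelCase property names and case-insensitive matching on read.
    private static readonly JsonSerializerOptions Options = new(JsonSerializerDefaults.Web);

    public static string Serialize<TData>(EventEnvelope<TData> evt) =>
        JsonSerializer.Serialize(evt, Options);

    public static EventEnvelope<TData>? Deserialize<TData>(string json) =>
        JsonSerializer.Deserialize<EventEnvelope<TData>>(json, Options);
}
```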

2. Event Publishing

Use Event Hubs or Service Bus Topics to publish events to other services, allowing eventual consistency and loose coupling.
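To illustrate the fan-out that topics provide, here is a hypothetical in-memory stand-in. InMemoryTopic is not an Azure API. Each subscription receives its own copy of every event and consumes it at its own pace, so the publisher never needs to know who is listening. With Service Bus, a topic and its subscriptions play these roles, accessed through the Azure.Messaging.ServiceBus SDK:

```csharp
using System.Collections.Generic;
using System.Diagnostics.CodeAnalysis;

// Hypothetical in-memory model of a topic with named subscriptions.
public sealed class InMemoryTopic<TEvent>
{
    private readonly Dictionary<string, Queue<TEvent>> _subscriptions = new();

    public void AddSubscription(string name) => _subscriptions[name] = new Queue<TEvent>();

    // Publishing copies the event into every subscription's queue.
    public void Publish(TEvent evt)
    {
        foreach (var queue in _subscriptions.Values)
            queue.Enqueue(evt);
    }

    // Each consumer drains only its own queue; throws KeyNotFoundException for unknown names.
    public bool TryReceive(string subscription, [MaybeNullWhen(false)] out TEvent evt) =>
        _subscriptions[subscription].TryDequeue(out evt);
}
```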

3. Projections / Read Models

Use Azure Functions to listen for new events and build read models in Cosmos DB, SQL Database, or Azure Cache for Redis, each tailored for fast querying.

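Example (in-process Azure Functions model, Cosmos DB extension 3.x):

```csharp
using System.Collections.Generic;
using Microsoft.Azure.Documents;
using Microsoft.Azure.WebJobs;
using Microsoft.Extensions.Logging;
using Newtonsoft.Json;

// Mirrors the event document shown earlier (Json.NET matches names case-insensitively).
public class OrderPlacedEvent
{
    public string EventType { get; set; }
    public string AggregateId { get; set; }
    public OrderPlacedData Data { get; set; }
}

public class OrderPlacedData
{
    public string UserId { get; set; }
    public List<string> Items { get; set; }
}

public static class OrderProjection
{
    [FunctionName("OrderProjection")]
    public static void Run(
        [CosmosDBTrigger("events", "orderEvents",
            ConnectionStringSetting = "CosmosDB",
            LeaseCollectionName = "leases",
            CreateLeaseCollectionIfNotExists = true)] IReadOnlyList<Document> input,
        ILogger log)
    {
        foreach (var doc in input)
        {
            // The container holds every order event, so skip types this projection ignores.
            var evt = JsonConvert.DeserializeObject<OrderPlacedEvent>(doc.ToString());
            if (evt?.EventType != "OrderPlaced") continue;

            // Update the read model (Orders collection) here, e.g. via an output binding.
            log.LogInformation("Projecting order {OrderId}", evt.AggregateId);
        }
    }
}
```

Version 4.x of the extension renames these trigger settings to Connection, LeaseContainerName, and CreateLeaseContainerIfNotExists, and can bind the change feed directly to your own event type instead of Document.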

✅ Benefits

- Auditability: Every action is recorded permanently.

- Rebuildable State: You can rehydrate state from the event log.

- Flexibility: Create multiple projections from the same event stream.

- Scalability: Azure’s serverless and storage options scale with demand.

- Temporal Queries: Analyze how state changed over time.
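Temporal queries follow directly from replay: to see state as of a past moment, replay only the events recorded up to that moment. The following is a minimal generic sketch, assuming events arrive in stored (append) order and each carries a timestamp like the one in the event schema. StateAsOf is a hypothetical helper:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class TemporalQuery
{
    // Rebuilds state as it was at 'asOf' by folding only events recorded up to then.
    // Assumes 'events' is already in stored (append) order; no re-sorting is done.
    public static TState StateAsOf<TEvent, TState>(
        IEnumerable<TEvent> events,
        Func<TEvent, DateTimeOffset> timestampOf,
        DateTimeOffset asOf,
        TState initial,
        Func<TState, TEvent, TState> apply) =>
        events.Where(e => timestampOf(e) <= asOf).Aggregate(initial, apply);
}
```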

⚠️ Challenges

- Event Versioning: As your schema evolves, you must handle multiple event versions gracefully.

- Replay Complexity: Replaying events can be time-consuming without snapshotting.

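- Data Consistency: Read models are eventually consistent, so queries may briefly return data that does not yet reflect the latest events.

- Storage Growth: Event logs grow indefinitely; archive older events (for example, to Blob Storage) to control cost.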
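One common way to handle event versioning is upcasting. Stored events are never rewritten; instead, older versions are converted to the current shape as they are read. The sketch below assumes hypothetical UserRegisteredV1 and UserRegisteredV2 schemas in which V2 splits a single Name into first and last names. Your real migration rule will differ:

```csharp
using System;
using System.Linq;

// Hypothetical schemas: V1 stored a single Name; V2 splits it into two fields.
public sealed record UserRegisteredV1(string UserId, string Name);
public sealed record UserRegisteredV2(string UserId, string FirstName, string LastName);

public static class Upcaster
{
    // Converts any stored version to the latest one at read time, so stored events
    // stay immutable and domain code only ever handles V2.
    public static UserRegisteredV2 Upcast(object storedEvent) => storedEvent switch
    {
        UserRegisteredV2 v2 => v2,
        UserRegisteredV1 v1 => SplitName(v1),
        _ => throw new NotSupportedException($"Unknown event type: {storedEvent?.GetType().Name ?? "null"}")
    };

    // Assumed rule: first word is FirstName, the rest (if any) is LastName.
    private static UserRegisteredV2 SplitName(UserRegisteredV1 v1)
    {
        var parts = v1.Name.Split(' ', 2, StringSplitOptions.RemoveEmptyEntries);
        return new UserRegisteredV2(v1.UserId, parts.ElementAtOrDefault(0) ?? "", parts.ElementAtOrDefault(1) ?? "");
    }
}
```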

🧩 Best Practices

- Use snapshots periodically to speed up state recovery.

- Version your events explicitly (e.g., UserRegisteredV1, UserRegisteredV2).

- Store event metadata like timestamps and correlation IDs for observability.

- Separate command and query responsibilities (CQRS) to avoid mixing concerns.

- Use Azure Monitor and Application Insights to track system health.
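Snapshotting amounts to starting from the latest saved state and replaying only newer events. The types below are assumptions for illustration. Each stored event carries a monotonically increasing Sequence, a snapshot records the Version (the last sequence applied), and the 100-event interval mentioned in the comment is arbitrary:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical storage shapes: an event with its position in the stream, and a snapshot.
public sealed record StoredEvent<TEvent>(long Sequence, TEvent Payload);
public sealed record Snapshot<TState>(long Version, TState State);

public static class Rehydrator
{
    // Starts from the snapshot (or 'initial' if there is none) and applies only
    // events whose Sequence is greater than the snapshot's Version.
    public static Snapshot<TState> Rehydrate<TState, TEvent>(
        Snapshot<TState>? snapshot, TState initial,
        IEnumerable<StoredEvent<TEvent>> events, Func<TState, TEvent, TState> apply)
    {
        long version = snapshot?.Version ?? 0;
        TState state = snapshot is null ? initial : snapshot.State;
        foreach (var e in events.Where(e => e.Sequence > version).OrderBy(e => e.Sequence))
        {
            state = apply(state, e.Payload);
            version = e.Sequence;
        }
        return new Snapshot<TState>(version, state);
    }

    // Take a new snapshot once 'every' events have accumulated (e.g. every = 100).
    public static bool ShouldSnapshot(long version, long lastSnapshotVersion, int every) =>
        version - lastSnapshotVersion >= every;
}
```

Because rehydrating from a snapshot must give the same result as a full replay, comparing the two is a useful check when you change projection or snapshot logic.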